In late October, Joey Shulman, our UX Manager, settled into his chair to interview an Otus user. I don’t know for sure, but I suspect Joey was prepared to hear positive feedback about how easy Otus was to use and how helpful it was for K-12 teachers. I’m pretty sure he was also prepared to hear about the user’s hopes and dreams for Otus: all the things this user wanted the product to have. That is not what happened.

At the conclusion of the user interview, Joey sent us one of the most scathing emails I have ever read. In short, our assessment module, which was designed to be robust, flexible, and powerful, was driving our longtime champions absolutely crazy. In Joey’s words, “If we don’t address our inability for teachers to be able to quickly create assessments, we better prepare our resumes because Otus has serious problems.”

At Otus, we try to take negative feedback in context. “This was just one user,” I told myself. So our chief product officer, Chris Hull, and I walked over to our trusted colleagues on the client services team and asked them, “Are assessments easy to create in Otus?” The team’s response was clear: NO! In fact, creating assessments was the biggest pain point in our platform. This needed to change.

We were too invested in an expensive decision

How did we end up here? When we redesigned our assessment platform, we spent so much time listening to user feedback that we lost sight of our overarching goal: to make teachers’ lives easier. We had partnered with a premier item bank so users could create assessment items quickly, and we had built on the best assessment engine on the market, which let our users choose from over 65 item types.

On reflection, we didn’t listen to the right feedback. We asked people what they wanted in an assessment platform, but not what they needed. They needed quick and easy; we built robust and deep. We didn’t ask the right questions of the people who rely on Otus day in and day out, who make up 90% of our users.

The process of fixing a mistake

Thanks to our creative director, Jordan Walker, we decided to make some big changes. We gathered people from our design, product, support, sales, and development teams to address the issue. We defined the task simply: give teachers a way to create a 10-item assessment, connect its items to learning standards, and assign it to students or groups of students, all within five minutes.

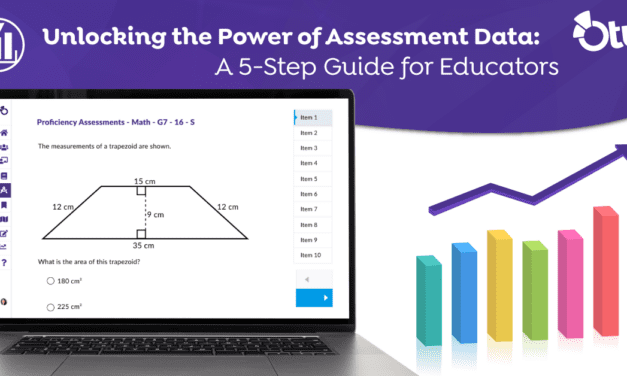

With a user story in hand and a shared understanding of it, we went to work. After wireframes, user interviews (including one with the person who gave us the news we didn’t want to hear), designs, iterations, and testing, we proudly released a new feature called Simple Assessment. Simple Assessment lets users quickly create and assign assessments and act on student responses in real time.

How user data helped us make better product development choices

By looking at the data, we learned that 87% of the items our users included in classroom assessments were multiple choice, true/false, or short answer. While our 65+ item types are cool, fewer than 2% of our users used them! In the end, the same data analytics we tout in Otus helped us finally see what our users actually want from us.

With Simple Assessment, we have made Otus easier to use. We intend to add even more item types to Simple Assessment in the coming months.

For users who need the more advanced 65+ item types, we have moved those items into their own area: Advanced Assessments.

So, users, where do we go from here? One thing is certain: our roadmap is, and always will be, in your court!